My First Claude Code Experience

A 7-Day, 68-Session Journey

Intro

Having heard a lot of good things about Claude Code lately, I had started compiling a list of things it might be useful to try it on, for when I inevitably decided to sign up for a Pro subscription.

For those unaware, it’s basically a CLI tool hooked to an LLM (Claude Sonnet 4 in this case), with the LLM being aware that it can read and edit files, run commands and such.

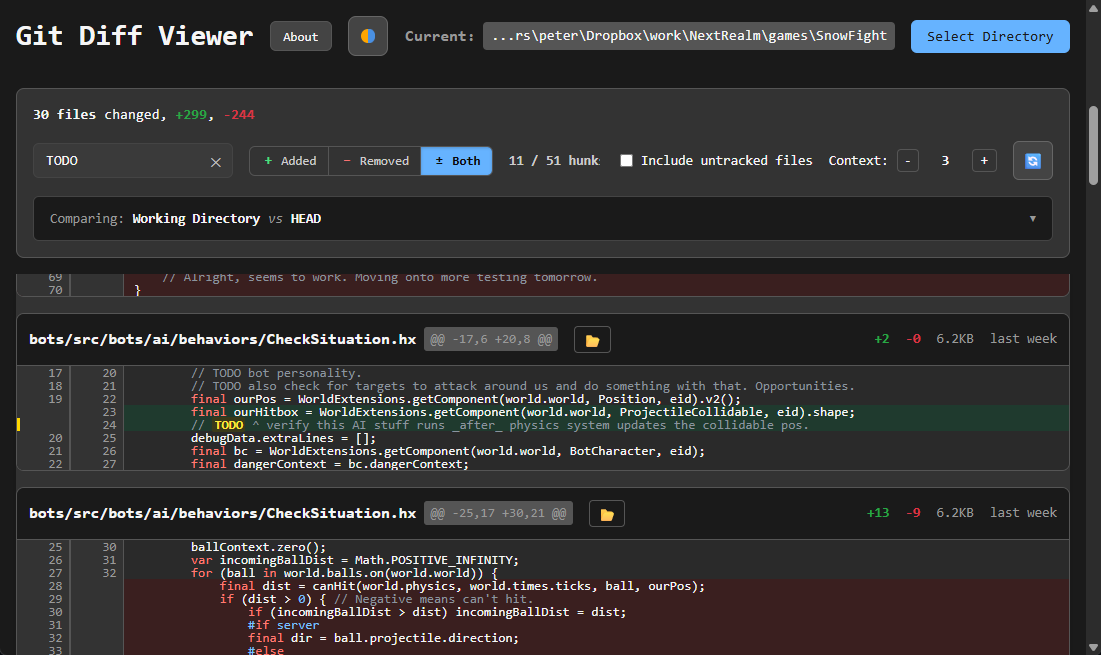

I used it to make a git diff viewer app.

git diff Needs A Frontend?

As I was crawling back from the mess in the repository of my current game project, I finally went to commit it. But before I commit such big and messy changes, I like to review them. Especially since I often leave “TODO” notes that are meant to be done as part of the commit. Once I commit, they become part of all the other old TODO notes…

Hey, I know it’s not a great system, but it works for me, alright, leave me alone.

Anyway, I simply like to go through and check which newly added TODOs I have, as a last-pass sort of thing. But they are difficult to find. Global search in the IDE won’t help (it finds all of them), and I can never remember the git command for it (and when I do, the output has no syntax highlighting)… So that was my first project, a Git Diff Viewer. Yay.
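For the curious, the core of the "show me only the TODOs I just added" idea fits in a few lines. This is a minimal sketch in Python, not the code from my app: the names `added_todos` and `todos_in_working_tree` are made up for illustration, and it assumes the input is regular `git diff` output in unified format.

```python
import re
import subprocess

# Matches a hunk header such as "@@ -10,3 +12,4 @@" and captures the
# starting line number on the new-file side.
HUNK_HEADER = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@")


def added_todos(diff_text, marker="TODO"):
    """Return (path, new_line_number, text) for every added line containing marker."""
    results = []
    path = None
    new_line = 0
    in_hunk = False
    for line in diff_text.splitlines():
        if line.startswith("diff --git "):
            # A new file section starts; wait for its "+++" header.
            in_hunk = False
            path = None
        elif not in_hunk and line.startswith("+++ "):
            target = line[4:]
            path = target[2:] if target.startswith("b/") else target
        elif line.startswith("@@"):
            match = HUNK_HEADER.match(line)
            if match:
                new_line = int(match.group(1))
                in_hunk = True
        elif in_hunk:
            if line.startswith("+"):
                if marker in line[1:]:
                    results.append((path, new_line, line[1:].strip()))
                new_line += 1
            elif line.startswith(" "):
                new_line += 1
            # Removed ("-") lines do not advance the new-file line counter.
    return results


def todos_in_working_tree():
    """Hypothetical helper: run `git diff HEAD` in the current repo and scan it."""
    diff = subprocess.run(
        ["git", "diff", "HEAD"], capture_output=True, text=True, check=True
    ).stdout
    return added_todos(diff)
```

Calling `todos_in_working_tree()` inside a repository should list only the TODOs introduced by uncommitted changes, which is exactly the last-pass check I wanted. It still has no syntax highlighting, hence the app.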

The idea was: it’s simple, it will take me half a day, and I can move on with my life with another neat tool in my box. It took 39 hours across 8 days.

- The first day was spent with chat LLMs making a NodeJS tool that generated a static HTML page with the output, neat and pretty. Then came Claude Code.

- 68 conversations over 7 days.

- 4,050 messages exchanged, 1,806 from me (includes stuff like /clear).

- Claude did 600 edits, wrote TODOs for itself 369 times, read files 143 times and used bash 62 times.

- Never ran out of tokens (Pro sub). ccusage claims it was 14,629 input and 190,338 output tokens, with 3,383,445 cache create and 44,443,980 cache read, totaling 48,032,392 tokens, which would have cost $28.92 via the API (so the $20 Pro subscription has already paid for itself).
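If you want to sanity-check those numbers, here is the arithmetic as a tiny Python sketch. The figures are the ones reported above; the variable names are mine, and the cache-read share is just something I derived from them.

```python
# Token counts as reported by ccusage for this project.
usage = {
    "input": 14_629,
    "output": 190_338,
    "cache_create": 3_383_445,
    "cache_read": 44_443_980,
}

total_tokens = sum(usage.values())

api_cost = 28.92       # estimated cost via the API, in USD
subscription = 20.00   # monthly Pro subscription, in USD
saving = round(api_cost - subscription, 2)

# Share of all tokens that were cache reads.
cache_read_share = usage["cache_read"] / total_tokens

print(f"total tokens: {total_tokens:,}")
print(f"saved versus API pricing: ${saving:.2f}")
print(f"cache reads: {cache_read_share:.1%} of all tokens")
```

The total matches the 48,032,392 tokens above, and the vast majority of them are cache reads, which is why the estimated API price stays so low for that many tokens.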

My Experience

I didn’t try anything fancy. I didn’t read much about how best to use Claude Code. I didn’t even make a CLAUDE.md. I kept it simple: a fresh conversation for each new task, a tiny amount of context, and the file we need to work on. Review, commit, repeat.

Almost everything was quick, rarely requiring more than a few replies from me, if any at all. Some more complex issues took several start-from-scratch attempts, and around 3 of them required me to dive deep and spend time understanding the code, researching and debugging. You know, actual programming.

What Worked Really Well

I always hate doing UI. Now I don’t have to. And if I don’t like it, I can just tell it to change it. Messy CSS? Who cares, I don’t have to work with it, that’s Claude’s job. Not getting annoyed because I can’t center a div is awesome.

Speaking of HTML and centering issues, there were some in this project too, and I was pleasantly surprised at how fast iterating on them was. I tell it what’s wrong, it tries stuff, I see the change immediately. I tell it to try a less silly approach (I’m not an expert in CSS, but adding 10 lines to fix one padding issue doesn’t sound good), and it does. One time we got stuck, and when I pasted the actual generated HTML structure, it figured out the issue right away, pretty awesome.

I took this project way further than I expected. It was fun to watch my ideas manifest so fast.

Once I told it to be creative, and it was. I was basically just playing with it at that point, but look at that neat dark/light theme toggle button. All done in a few minutes.

At some point I switched from using CSS media queries for the dark/light theme to a CSS variables approach so I could change the theme dynamically. It required adapting all the components, which is exactly the kind of task you give an LLM and go make a coffee. And it worked.

It writes some code you don’t like? Ask it. That I really liked. As a research and learning tool, if you use it as such, it’s surprisingly helpful, because it has all the context it needs to give you what you need to learn. No more getting stuck on issues just because you don’t know what to google. Well, as long as what you are doing isn’t something obscure enough for the LLM to have no clue. Then you are on your own.

Doing passes of “what could be improved in this code” as a sort of regular cleanup was nice. It did come up with a bunch of useless/wrong/dangerous suggestions, but often I managed to get it to refactor the code, move it around or make it tidier, which is a self-sustaining cycle, as it makes it easier for the LLM to work on the code later.

When I gave the original NodeJS script to Claude, it moved all the git calls to Rust, since I picked Tauri as the packager. That surprised me, but it makes sense. I don’t know any Rust, but in this project it just worked (almost) without issues. A few times I asked it to explain the code to me, and that, plus Rust’s legendary error messages, was actually quite educational.

All this makes it easy (and fun!) to try new things. Or do things you wouldn’t have time for, like messing with UI.

And, for me, I love how it allows me to just talk my issues out with something that has at least a bit of context about the project. Makes me procrastinate less, get stuck less, and I finally have a co-programmer to bounce ideas off. It sounds silly, since it’s just an LLM, but it’s not replacing a human for me (I almost never have any humans for my projects), what it is, is a pretty good stand-in though.

What Did Not Work Well

I don’t like how far away from the code I got. On one hand it’s superb to get stuff done so fast and without having to acquire domain-specific knowledge. On the other hand it’s not sustainable for bigger projects, and it slows down learning and acquiring new knowledge. Still, I feel it’s a net positive in the end.

Some things can be hit and miss. Claude has Svelte 5 knowledge, and I picked Svelte 5 on purpose, even though every LLM tried to convince me to use React (because they know it better). It managed pretty well, but did make some errors. You can’t rely on it actually “knowing” the domain well. And sometimes it mixed things up with old knowledge, e.g. it used the deprecated event dispatcher instead of event callbacks.

It can easily lead you down the wrong path, and it takes just a little hint to steer it back. But to be able to give that hint, you need to know what you are doing.

It’s definitely not “smart”. It often solves stuff in a non-ideal way, even if it has the knowledge and the context. I had it fix some warnings and errors, which it did, but I didn’t understand the code, so I prompted Claude to explain it to me. That led me to realize it didn’t understand the big picture, and that a better and nicer fix was possible. Did it fix the error? Yes. Is it maintainable in the long term? No.

The resulting code isn’t great. Bigger projects would require better refactoring. But it’s also not that bad, and it can sort of be improved on by prompting it, so let’s call it “good enough”.

What I Would Like Next

Some of the things I was doing, like the cleanup passes, could easily be automated. On each commit, have some LLM look into cleanup, refactoring, etc. and have it pop up with suggestions/PRs if it deems it a good idea. It would have to be good at it though. A sort of agentic LLM wearing multiple hats doing things for me.

I could imagine running some local LLM, or maybe even Claude if tokens permit, to analyze prompts and responses for common knowledge that’s not yet stored anywhere. A sort of automatic project-prompt keeper. It could also be used to improve prompts before sending them, or to point out when they are too vague or missing required context.

Tests. Having a proper test suite would make some bigger changes much easier. I never tried testing UI stuff though, and for this project it doesn’t matter much, but I did find myself manually re-testing the core functionality a few times. Would prompting the LLM to make such tests result in something usable, or just a waste of time for this project? I’m unsure.

Maybe I will try that CLAUDE.md thing. For bigger projects at least.

Conclusion

Claude Code is Rubber Duck 2.0 on steroids with magic power.

I have (by which I mean Claude has) compiled some data from the conversations for this project. You can find it here, if you are interested.